The pretraining efficiency and generalization of large language models (LLMs) depend heavily on the quality and diversity of the training corpus. Traditional data curation pipelines often treat quality and diversity as separate objectives, applying quality filtering first and domain balancing afterward. This sequential optimization overlooks the interdependencies between the two factors: high-quality datasets frequently exhibit domain biases, while diversified datasets may compromise quality. Under a fixed training budget, both dimensions need to be optimized simultaneously to maximize model performance. However, defining quality and diversity, and optimizing them jointly, remain non-trivial challenges.

ByteDance Introduces QuaDMix

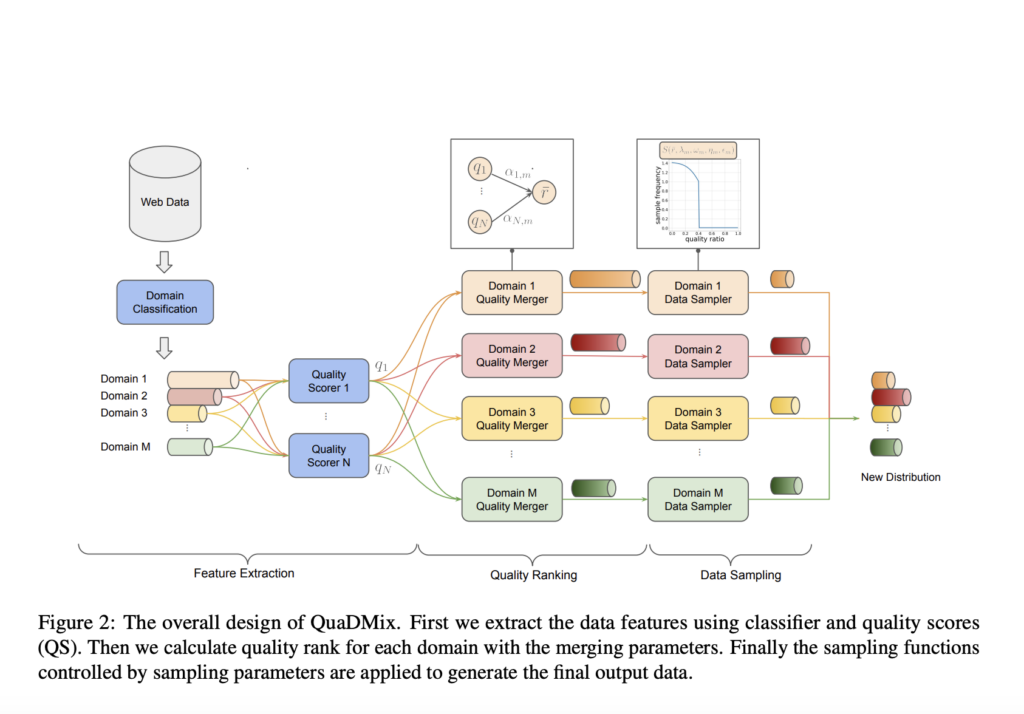

ByteDance presents QuaDMix, a unified data selection framework that systematically balances quality and diversity during LLM pretraining. QuaDMix scores each data sample against multiple quality criteria, assigns it a domain label, and then determines its sampling probability through a parameterized function. To tune this function efficiently, the framework trains small proxy models and fits a LightGBM-based regression model that predicts downstream performance from the sampling parameters, avoiding exhaustive large-scale training. Experiments show that QuaDMix achieves an average performance improvement of 7.2% across multiple benchmarks compared with methods that optimize quality and diversity separately, underscoring the effectiveness of a joint approach.

QuaDMix operates in three principal stages: feature extraction, quality aggregation, and quality-diversity aware sampling. Initially, each document is annotated with domain labels and multiple quality scores. These scores are normalized and merged using domain-specific parameters to compute an aggregated quality score. Documents are subsequently sampled according to a sigmoid-based function that prioritizes higher-quality samples while maintaining domain balance through parameterized controls.
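The three stages can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not ByteDance's implementation: min-max normalization of each quality score, a per-domain weighted sum for aggregation, and a sampling probability of the form eta_m / (1 + exp(-lam_m * (q - omega_m))) are all assumed here. The names `Document`, `document_probabilities`, and `select`, and the per-domain parameters `weights`, `lam`, `omega`, and `eta`, are hypothetical. The paper's exact normalization and sampling function may differ (for example, it may allow a document to be sampled more than once).

```python
import math
import random
from dataclasses import dataclass, field


@dataclass
class Document:
    doc_id: str
    domain: str  # label assigned by a domain classifier
    scores: dict = field(default_factory=dict)  # raw quality scores; higher = better (assumed)


def normalize_scores(docs, criteria):
    """Min-max normalize each quality criterion to [0, 1] across the corpus (assumed scheme)."""
    normalized = {d.doc_id: {} for d in docs}
    for c in criteria:
        values = [d.scores[c] for d in docs]
        lo, hi = min(values), max(values)
        span = hi - lo
        for d in docs:
            normalized[d.doc_id][c] = (d.scores[c] - lo) / span if span > 0 else 0.0
    return normalized


def aggregate_quality(doc, norm, weights):
    """Merge normalized scores using the weights assigned to this document's domain."""
    return sum(w * norm[doc.doc_id][c] for c, w in weights[doc.domain].items())


def sampling_probability(q, lam, omega, eta):
    """Sigmoid in aggregated quality q: lam = steepness, omega = threshold, eta = domain ceiling."""
    return eta / (1.0 + math.exp(-lam * (q - omega)))


def document_probabilities(docs, criteria, params):
    """Map each doc_id to its sampling probability under the per-domain parameters."""
    norm = normalize_scores(docs, criteria)
    weights = {m: p["weights"] for m, p in params.items()}
    probs = {}
    for d in docs:
        p = params[d.domain]
        q = aggregate_quality(d, norm, weights)
        probs[d.doc_id] = sampling_probability(q, p["lam"], p["omega"], p["eta"])
    return probs


def select(docs, criteria, params, seed=0):
    """Keep each document independently with its computed probability (Bernoulli sampling)."""
    rng = random.Random(seed)
    probs = document_probabilities(docs, criteria, params)
    return [d.doc_id for d in docs if rng.random() < probs[d.doc_id]]


if __name__ == "__main__":
    docs = [
        Document("a", "web", {"edu": 0.9, "fluency": 0.8}),
        Document("b", "web", {"edu": 0.1, "fluency": 0.2}),
        Document("c", "code", {"edu": 0.5, "fluency": 0.5}),
    ]
    params = {
        "web": {"weights": {"edu": 0.7, "fluency": 0.3}, "lam": 10.0, "omega": 0.5, "eta": 0.9},
        "code": {"weights": {"edu": 0.2, "fluency": 0.8}, "lam": 10.0, "omega": 0.5, "eta": 1.0},
    }
    print(document_probabilities(docs, ["edu", "fluency"], params))
    print(select(docs, ["edu", "fluency"], params, seed=42))
```

Keeping `weights`, `lam`, `omega`, and `eta` per domain is what lets a single function trade quality against domain balance: a low `omega` or high `eta` for an under-represented domain admits more of its documents even when their aggregated quality is modest.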

Optimization is performed by training thousands of proxy models across different parameter settings. A regression model, trained on these proxy experiments, predicts performance outcomes, enabling identification of optimal sampling configurations. This method allows for a structured exploration of a high-dimensional parameter space, aligning data selection more closely with intended downstream tasks.

QuaDMix provides several advantages:

- Unified optimization of data quality and domain diversity.

- Adaptability to task-specific requirements through proxy evaluation target selection.

- Computational efficiency by circumventing exhaustive full-model retraining.

- Consistent downstream performance improvements without increasing compute budgets.

Experimental Results and Insights

Validation experiments were conducted on the RefinedWeb dataset, training 530M-parameter models from scratch. QuaDMix was compared against several baselines, including Random Selection, Fineweb-edu, AskLLM, DCLM, DSIR, and RegMix. QuaDMix consistently outperformed these methods, achieving an average score of 39.5% across nine diverse benchmarks.

Key observations include:

- Joint optimization strategies consistently outperform isolated quality- or diversity-focused methods.

- Proxy model performance correlates strongly with large-scale model outcomes, validating the efficacy of the proxy-based approach.

- Data mixtures optimized for specific downstream tasks further enhance task performance.

- Merging multiple quality criteria reduces inherent biases and improves overall model robustness.

- Expanding token diversity beyond a certain threshold yields diminishing returns, emphasizing the importance of curated quality over sheer quantity.

Conclusion

QuaDMix offers a principled approach to data selection for LLM pretraining, addressing the longstanding challenge of optimizing data quality and diversity simultaneously. By integrating quality aggregation and domain-aware sampling within a unified framework and leveraging proxy-based optimization, QuaDMix establishes a scalable methodology for improving LLM pretraining efficiency. While there is room for future improvement, such as refining the parameter space and enhancing proxy model fidelity, QuaDMix represents a significant step toward more systematic and effective data curation for large-scale model development.

Source: https://www.marktechpost.com/